Just over a year has passed since we originally launched Meta, let Palestine Speak; two years since the May Uprisings of 2021, which spurred an Independent Human Rights Due Diligence report into Meta’s practices in Israel and Palestine; and over three years since Facebook, We Need to Talk. In each of these moments, a collective of voices, including civil society organizations, digital rights experts, independent auditors, elected officials, and regular users of social media, has joined in raising the alarm about Meta’s discriminatory treatment of Palestinian users and content. Regrettably, much work remains to be done. The distressing reality is that across Meta’s platforms, Palestinian voices and narratives continue to be disproportionately silenced and censored.

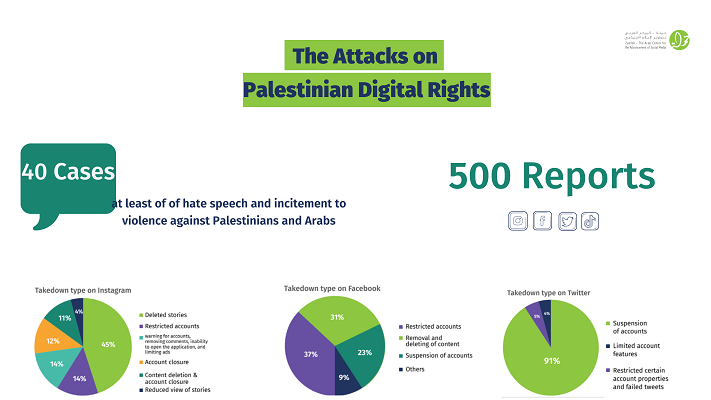

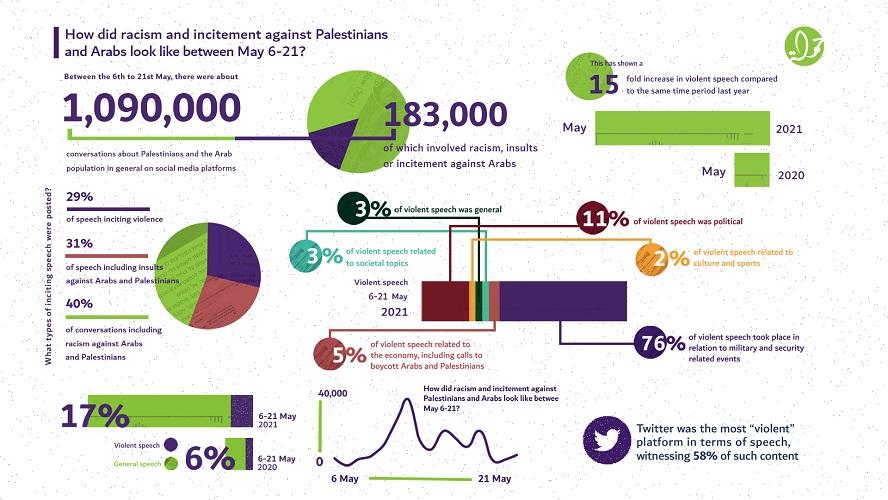

As the largest social media company in the world, Meta allows billions of people around the globe to connect with each other, share opinions, and learn about the world. However, Meta is not that place for millions of Palestinians and advocates for Palestinian human rights worldwide. Their content and accounts are censored and over-moderated at disproportionately high rates. This dynamic illustrates the systemic bias and resulting over-enforcement of content moderation policies on Palestinian content. Meanwhile, Israeli content in Hebrew is insufficiently moderated, which allows hate speech and incitement to violence against Palestinians and Arabs to spread widely across the platforms.

Double standards in content moderation policies are silencing the Palestinian narrative and violating Palestinian digital rights. This creates a chilling effect among Palestinians and citizens advocating for international law worldwide. Furthermore, over-moderation erases the documentation of human rights violations, and the systematic muzzling of Palestinians online dissuades political participation. According to our research, two-thirds of Palestinian youth are afraid to voice their political opinions online. This silencing negatively influences public opinion about the Palestinian freedom struggle by spreading stereotypes of Palestinians, while simultaneously preventing them and their allies globally from raising awareness of their cause. Meta must stop silencing Palestinian voices and narratives. Content moderation policies need to be equal, objective, transparent, and clear for all.
